When you run backend jobs — duplicate detection, geocode QA, brand matching, ML feature extraction where spatial proximity matters — over tens of millions of places, you hit a scaling wall:

- dense cities blow up: a single coarse hex over NYC can hold hundreds of thousands of places, causing OOM or multi-hour runtimes

- sparse regions waste work: millions of nearly-empty cells become millions of pointless queue items

A fixed-resolution grid can't match the wildly uneven density of real-world place data. What you need is adaptive processing blocks: spatial partitions where every unit has a bounded, predictable cost.

This post describes a pattern in Postgres with the h3-pg extension:

Emit non-overlapping H3 cells such that each cell has

place_count <= threshold.

Dense areas subdivide into finer hexes; sparse areas stay coarse. The output is an adaptive frontier — a cut across H3 resolutions where every place belongs to exactly one emitted cell.

All code and benchmark queries are in the overturemaps-pg repo.

The goal

Given a table of places with a geometry column, produce:

cell (h3index), res (int), place_count (bigint)

…where:

- each place contributes to exactly one emitted cell (no double counting)

- emitted cells do not overlap (safe to process independently)

place_count(cell) <= thresholdby construction

These cells work as queue items, batch inputs, or sharding units for backend processing.

Strict nesting via cell_to_parent

H3 distinguishes logical containment (exact) from geographic containment (approximate). h3_cell_to_parent is a bitwise truncation of the index — the logical hierarchy is exact. Geographic boundaries are a different story (cell boundary polygons don't nest perfectly), but for index-based work that doesn't matter.

We compute the cell once at res 10 and derive all coarser levels via h3_cell_to_parent(). This is both fast (one latlng_to_cell call instead of ten — cell_to_parent is just bit manipulation) and simple (one source of truth for the hierarchy).

The pattern: "emit the coarsest cell under a threshold"

Pick a threshold like 10,000 places.

For each place (really: for each res 10 bucket of places), find the coarsest resolution where the cell count is <= threshold.

- if a res 4 cell has 8,000 places → emit res 4

- if a res 4 cell has 80,000 places → check res 5, then res 6, ... until it fits

That gives you a frontier across resolutions.

Using the cells as processing blocks

The output is a work queue:

- each row is a unit of work (process that cell)

place_countis a cheap proxy for expected cost- the threshold keeps worst-case tasks bounded

A typical pattern:

- materialize the frontier (

REFRESH MATERIALIZED VIEW) - enqueue

cellids into your job system - for each cell, load/process places in that cell, then write results

Handling boundary effects

Any spatial chunking scheme has the same boundary problem: two places can be true duplicates while landing in adjacent cells. If you only compare within the home cell, you miss cross-boundary pairs.

The fix is to process each cell with an overlap buffer using H3's neighbor lookup:

for each cell in frontier:

candidates = places_in(cell) ∪ places_in(gridDisk(cell, 1))

results = find_matches(candidates)

emit only results whose "home" cell == cell

- Load

cell ∪ gridDisk(cell, 1)(ork=2if your matching radius exceeds one hex width). This stays in H3 index space — no geometry intersections. - Only emit results for the home cell so you never double-report.

- Globally dedupe by stable keys as a safety net — e.g.

(min(id_a, id_b), max(id_a, id_b))for pair-based dedup.

The overlap means each job loads ~7x more candidates at k=1 (home cell plus 6 neighbors). In practice the cost is modest because the threshold already caps each cell's count.

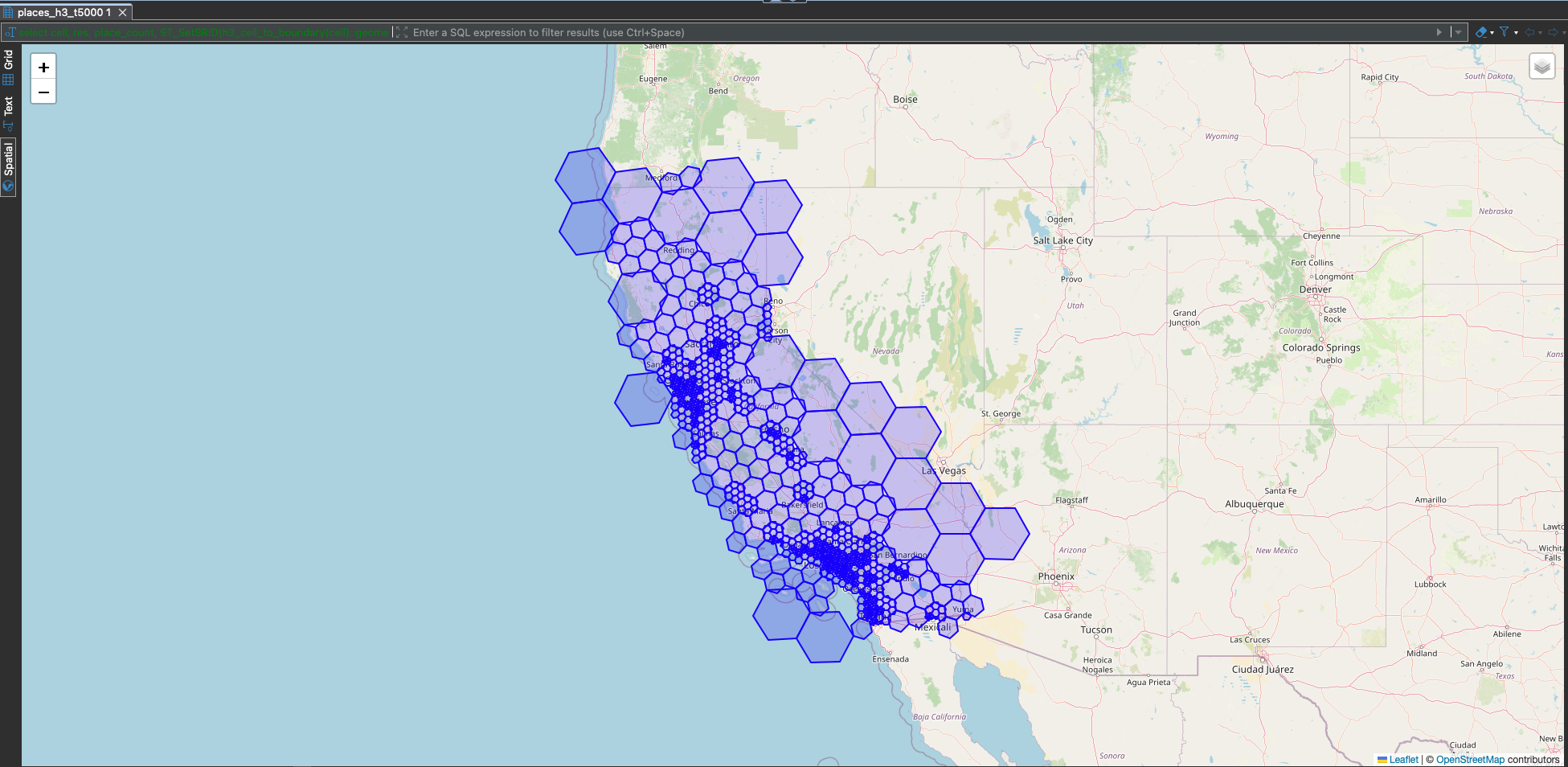

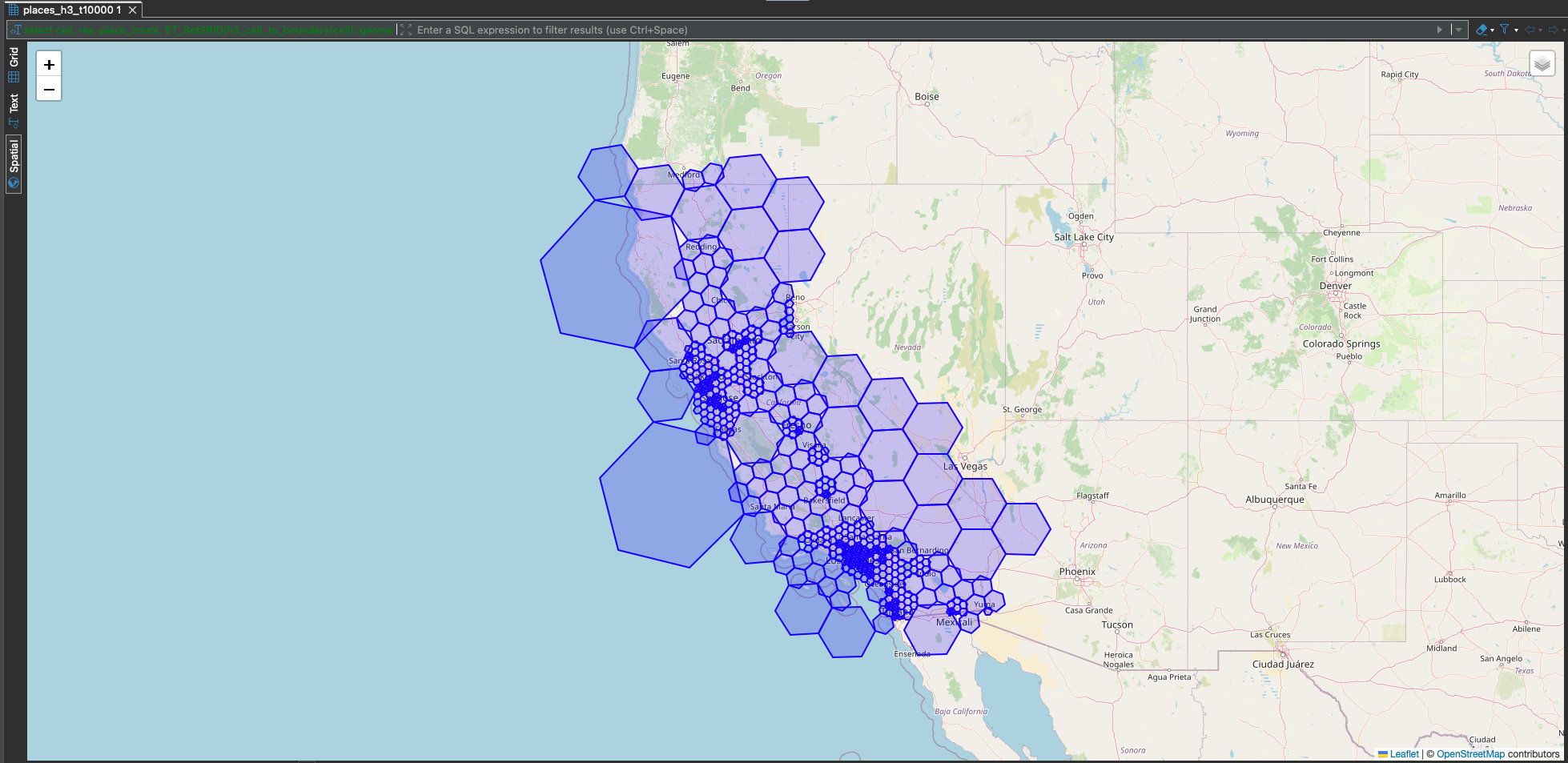

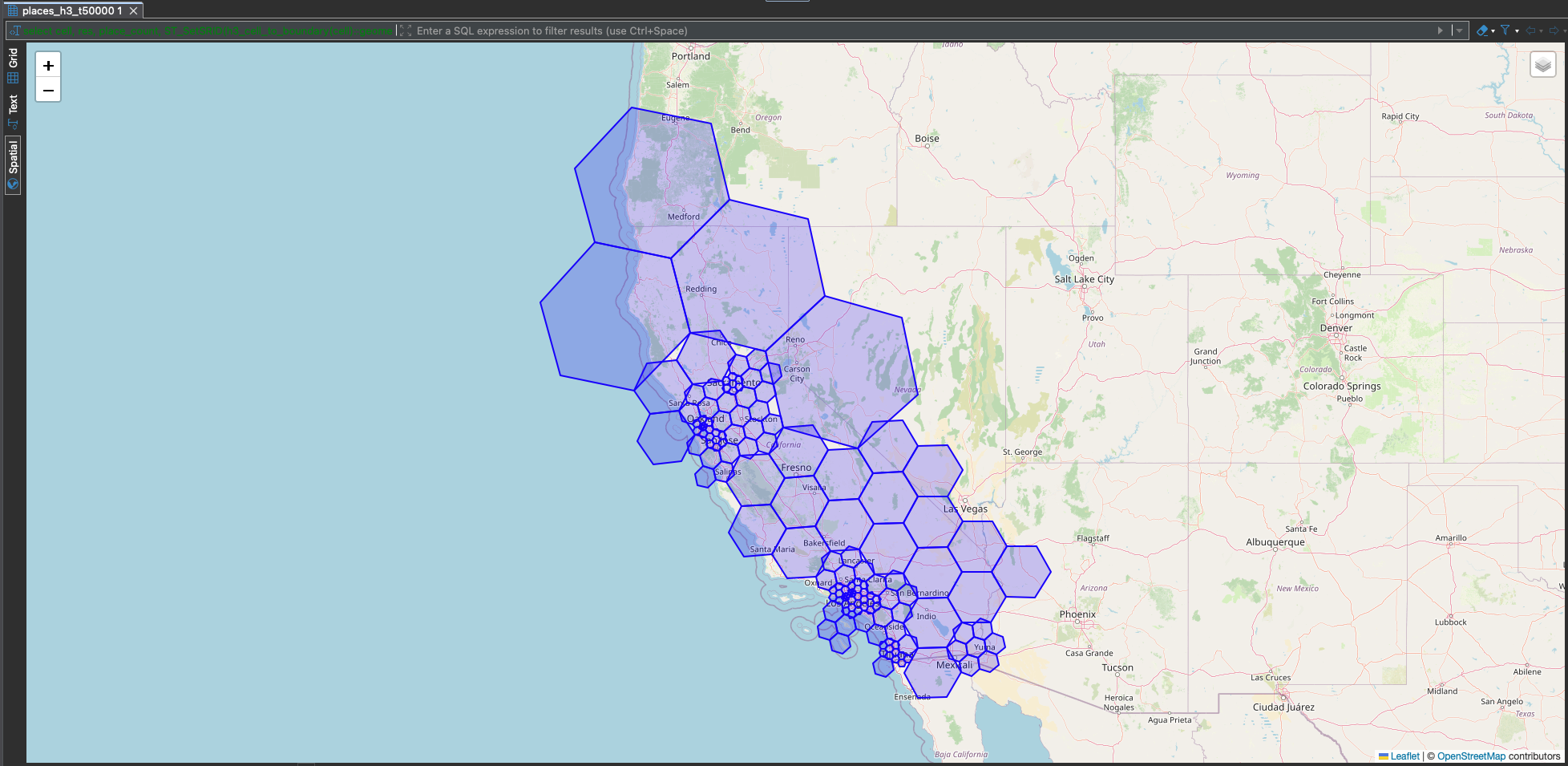

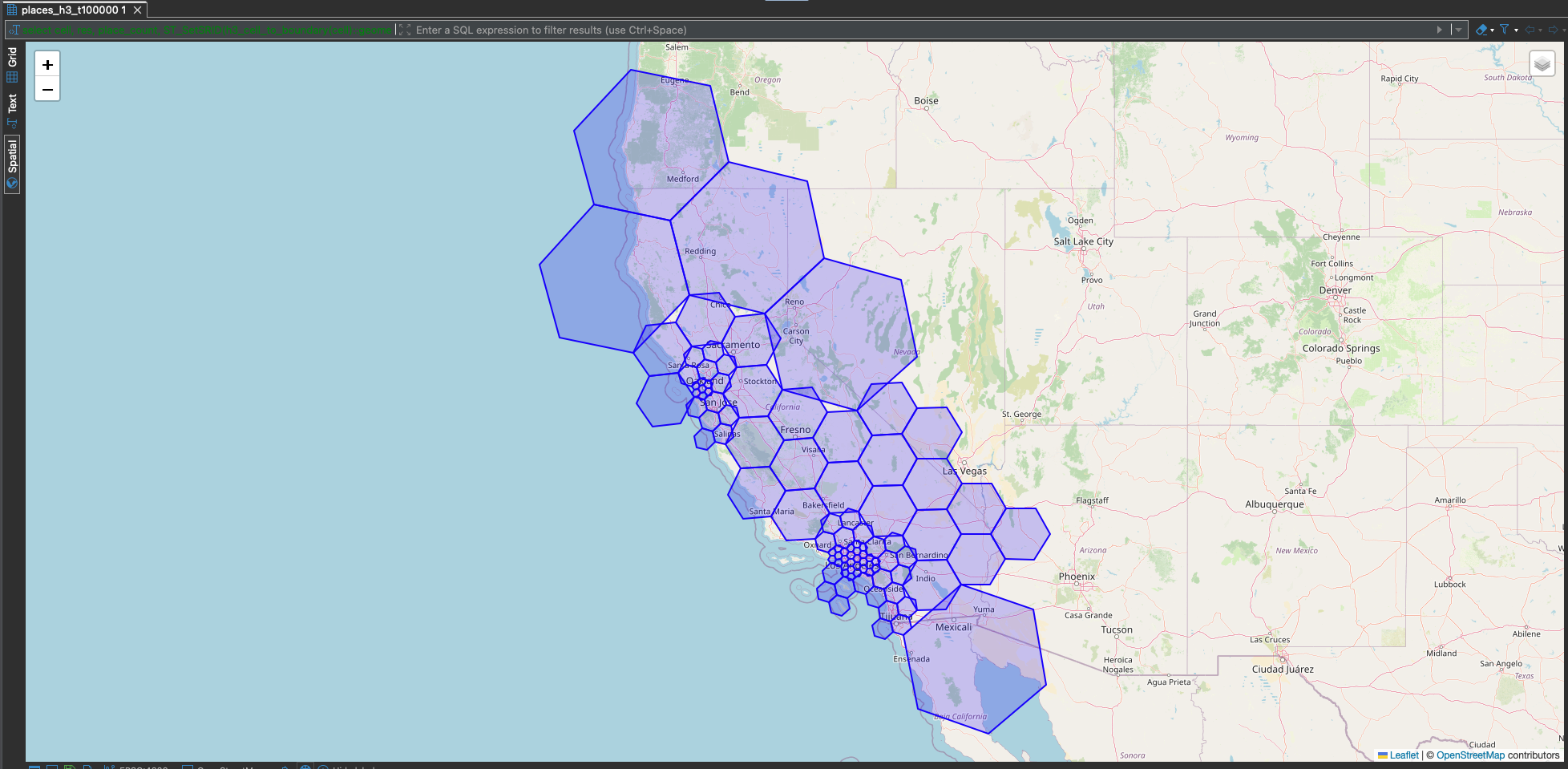

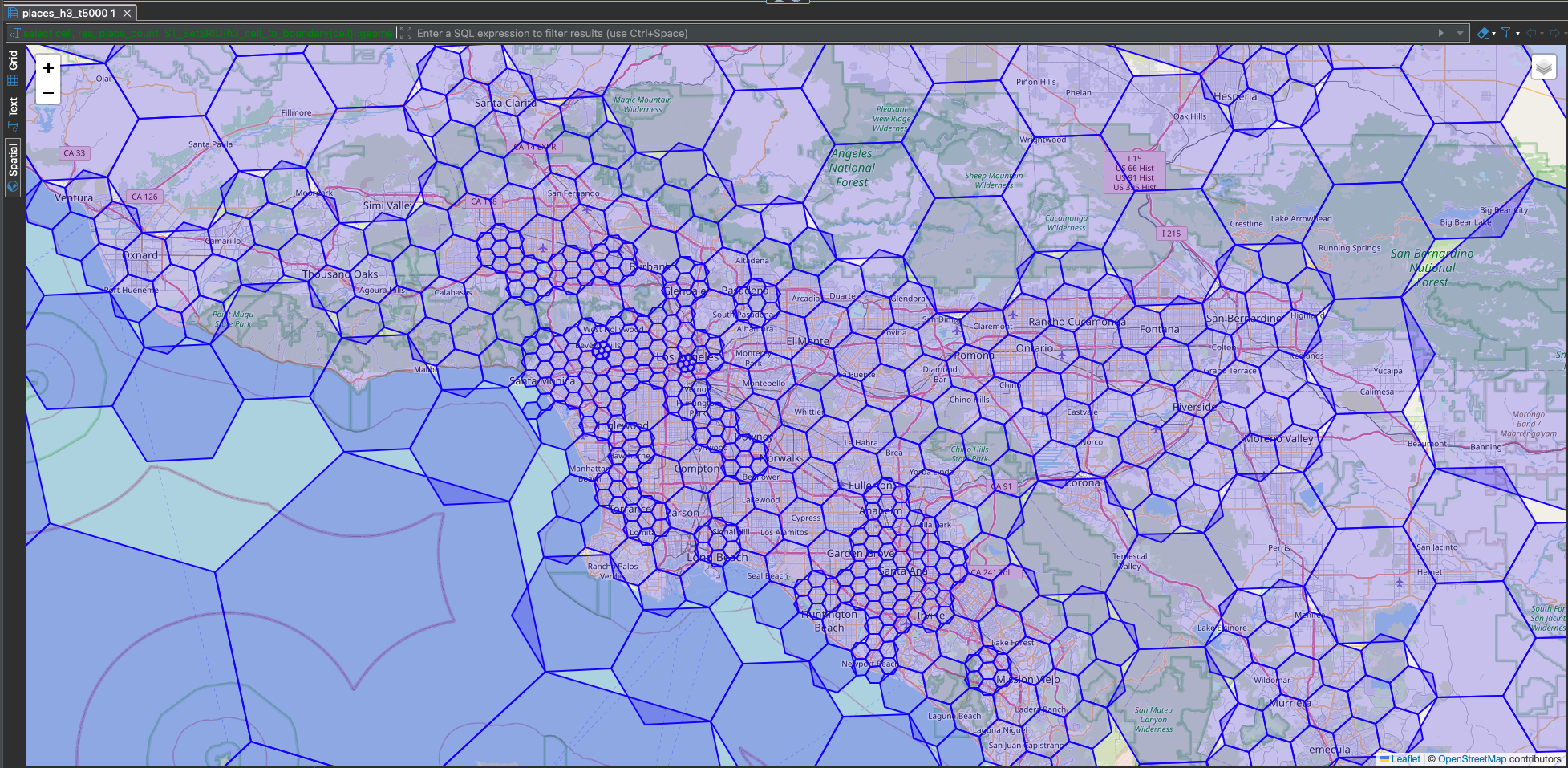

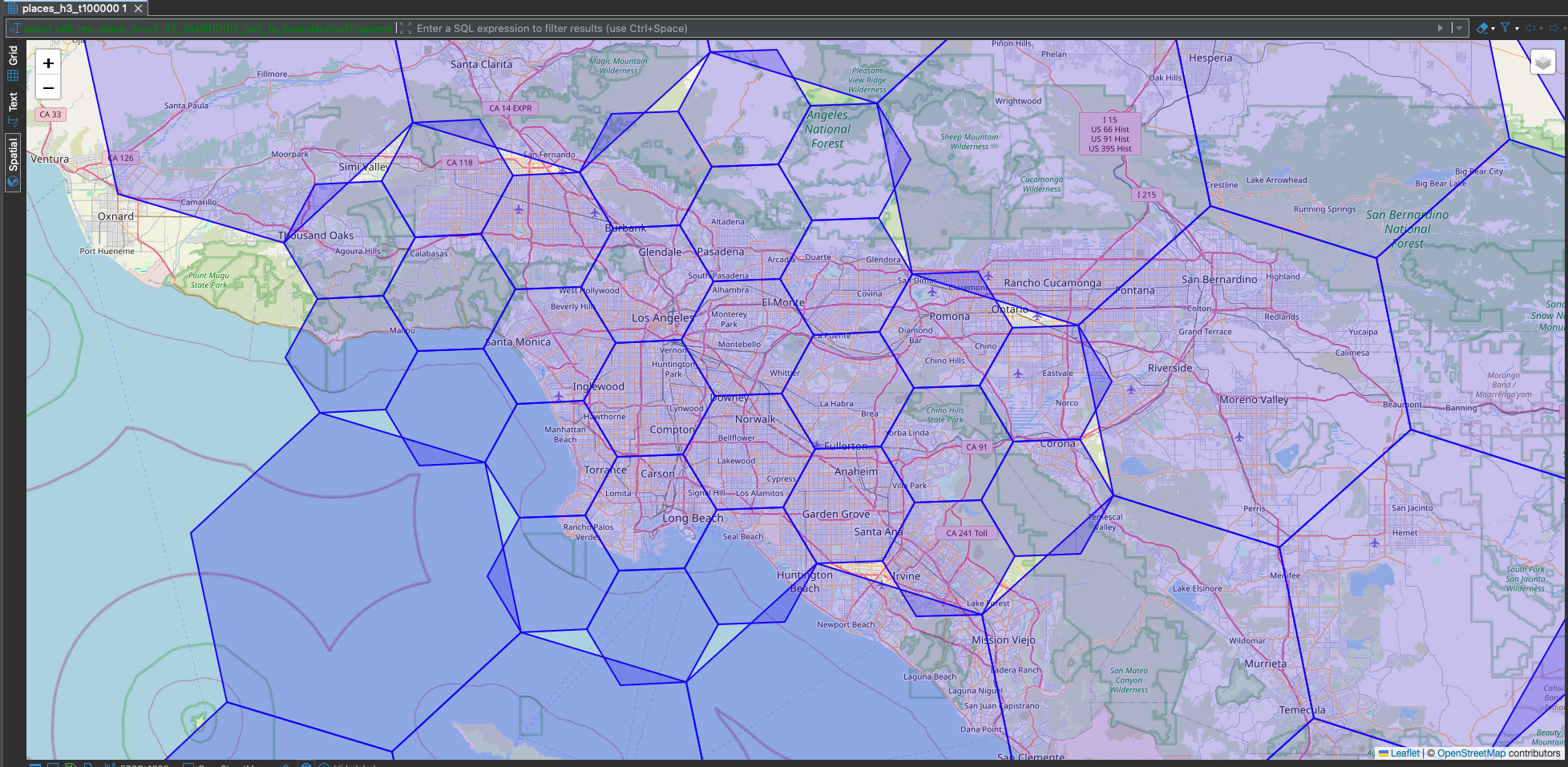

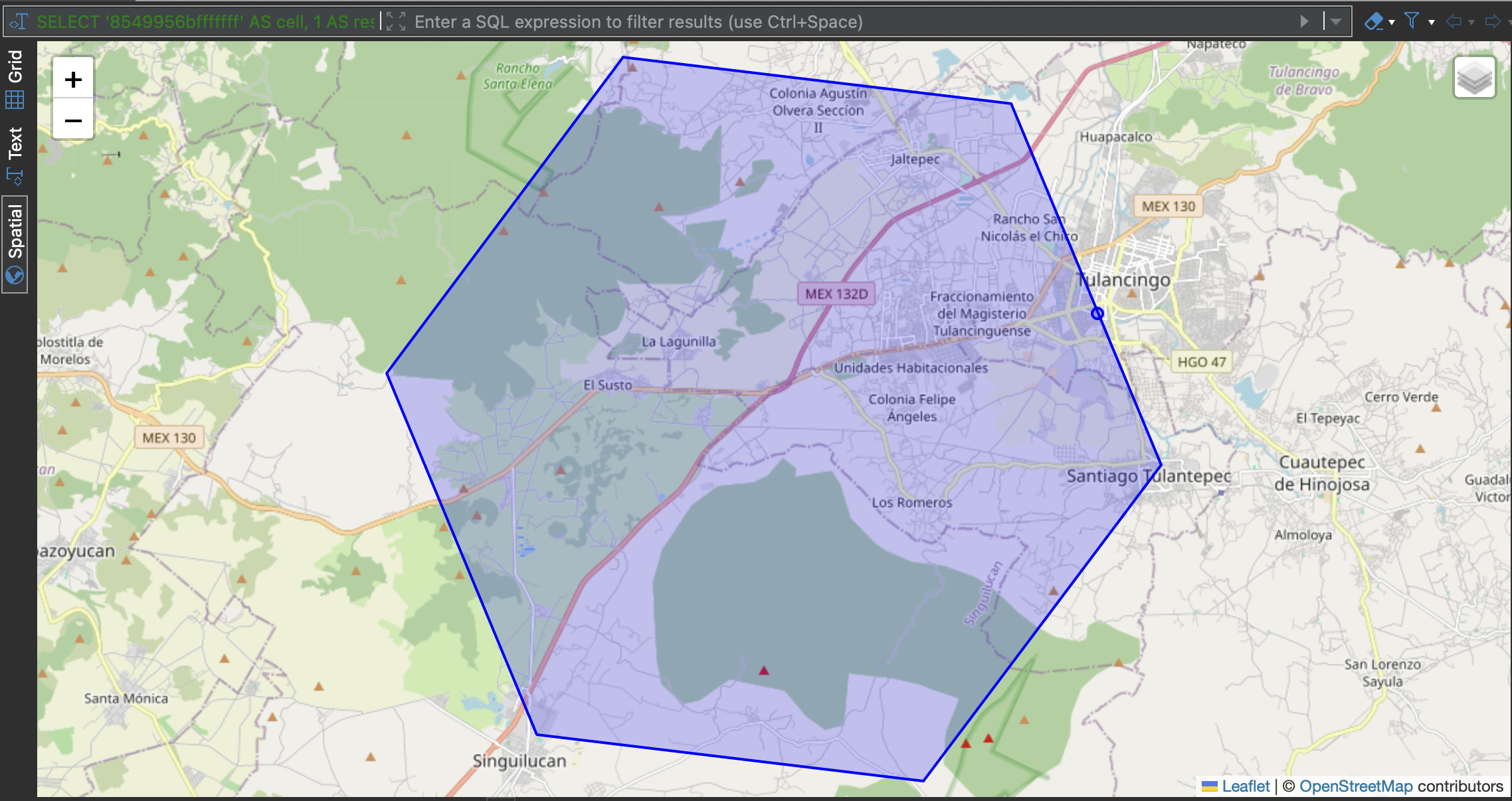

Visualizing the frontier

The processing blocks are the deliverable — but if you want to sanity-check partitions, convert cells to polygons and render them. Color by place_count, by res, or extrude in 3D.

h3_cell_to_boundary is only needed for visualization. All partitioning and overlap logic stays in H3 index space.

Implementation

Requires extensions: postgis, h3, h3_postgis. The view does four things:

- compute

h3_r10once and deriveh3_r1..h3_r9viah3_cell_to_parent - compute counts per cell at each resolution

- join counts back and choose

emit_resvia aCASEladder - group by the emitted cell so each place is counted exactly once

CREATE MATERIALIZED VIEW places_h3_t{threshold} AS

WITH

base AS (

SELECT h3_r10, place_count,

h3_cell_to_parent(h3_r10, 9) AS h3_r9, h3_cell_to_parent(h3_r10, 8) AS h3_r8,

h3_cell_to_parent(h3_r10, 7) AS h3_r7, h3_cell_to_parent(h3_r10, 6) AS h3_r6,

h3_cell_to_parent(h3_r10, 5) AS h3_r5, h3_cell_to_parent(h3_r10, 4) AS h3_r4,

h3_cell_to_parent(h3_r10, 3) AS h3_r3, h3_cell_to_parent(h3_r10, 2) AS h3_r2,

h3_cell_to_parent(h3_r10, 1) AS h3_r1

FROM (

SELECT h3_latlng_to_cell(p.geometry::point, 10) AS h3_r10,

COUNT(*)::bigint AS place_count

FROM places p GROUP BY 1

) r10

),

c1 AS (SELECT h3_r1 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c2 AS (SELECT h3_r2 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c3 AS (SELECT h3_r3 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c4 AS (SELECT h3_r4 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c5 AS (SELECT h3_r5 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c6 AS (SELECT h3_r6 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c7 AS (SELECT h3_r7 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c8 AS (SELECT h3_r8 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c9 AS (SELECT h3_r9 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

c10 AS (SELECT h3_r10 AS cell, SUM(place_count)::bigint AS cnt FROM base GROUP BY 1),

tagged AS (

SELECT b.h3_r10,

CASE

WHEN c1.cnt <= {threshold} THEN 1 WHEN c2.cnt <= {threshold} THEN 2

WHEN c3.cnt <= {threshold} THEN 3 WHEN c4.cnt <= {threshold} THEN 4

WHEN c5.cnt <= {threshold} THEN 5 WHEN c6.cnt <= {threshold} THEN 6

WHEN c7.cnt <= {threshold} THEN 7 WHEN c8.cnt <= {threshold} THEN 8

WHEN c9.cnt <= {threshold} THEN 9 ELSE 10

END AS emit_res

FROM base b

JOIN c1 ON b.h3_r1=c1.cell JOIN c2 ON b.h3_r2=c2.cell JOIN c3 ON b.h3_r3=c3.cell

JOIN c4 ON b.h3_r4=c4.cell JOIN c5 ON b.h3_r5=c5.cell JOIN c6 ON b.h3_r6=c6.cell

JOIN c7 ON b.h3_r7=c7.cell JOIN c8 ON b.h3_r8=c8.cell JOIN c9 ON b.h3_r9=c9.cell

JOIN c10 ON b.h3_r10=c10.cell

)

SELECT

h3_cell_to_parent(t.h3_r10, t.emit_res) AS cell,

t.emit_res AS res,

SUM(b.place_count)::bigint AS place_count

FROM base b

JOIN tagged t ON b.h3_r10 = t.h3_r10

GROUP BY 1, 2;

Why "res 10 then parent"

Two reasons.

Performance. h3_latlng_to_cell does real work (face lookup, coordinate projection, digit computation). h3_cell_to_parent is nearly free — it's a bitwise truncation. For 73M places, that's 1 expensive call + 9 cheap calls instead of 10 expensive calls per point.

Correctness. H3's logical hierarchy is exact: cell_to_parent always gives you the one true ancestor. But latlng_to_cell is a geometric classification step — it projects a point onto an icosahedral face and assigns it to the nearest cell center. H3's docs are silent on whether latlng_to_cell(P, 6) is guaranteed to equal cell_to_parent(latlng_to_cell(P, 7), 6) for every point. By deriving all resolutions from a single res 10 cell, we sidestep the question entirely.

Note: if you need metric-distance buffers (e.g. "all places within 500m"), use PostGIS geography (geodesic) or H3 gridDisk neighbors, not EPSG:3857 planar math.

Validation

The benchmark suite runs two checks against the view:

No overlapping cells. For every emitted cell, generate all its ancestors via

h3_cell_to_parentand check that none of them are also emitted. Result: 0 overlapping pairs across all four thresholds.Boundary mismatch proof. Find a place that

h3_latlng_to_cellassigns to a cell but that falls outside the cell'sh3_cell_to_boundary_geometrypolygon. This always finds one — because H3's geographic containment is approximate. It confirms that you should never useST_Intersectson boundary polygons to determine cell membership; always use the index.

Picking a threshold: what the numbers say

Benchmarked on 72,783,221 places from the Overture Maps dataset. Each view takes ~190 seconds to materialize regardless of threshold (the dominant cost is the res 10 grouping).

| threshold | cells | res range | avg/cell | stddev | Gini |

|---|---|---|---|---|---|

| 5,000 | 63,795 | 1–9 | 1,141 | 1,192 | 0.547 |

| 10,000 | 32,740 | 1–9 | 2,223 | 2,350 | 0.551 |

| 50,000 | 7,279 | 1–7 | 9,999 | 11,695 | 0.597 |

| 100,000 | 3,910 | 1–6 | 18,615 | 23,444 | 0.630 |

The Gini coefficient (0 = perfectly uniform, 1 = all places in one cell) measures distribution evenness.

Lower Gini = more uniform distribution. The sweet spot is the highest threshold where Gini hasn't degraded much — you get fewer cells (less scheduling overhead) without sacrificing workload balance.

5K→10K: free win. Doubling the threshold cuts cells in half (64K → 33K) with a Gini increase of just 0.004 (0.547 → 0.551). That's half the queue items for essentially the same distribution quality.

10K→50K: the unexplored gap. Gini jumps from 0.551 to 0.597 (+0.046) — a much bigger degradation. But somewhere in this range (say 20K–25K) the Gini likely stays close to 0.55 while cutting cells further. We didn't benchmark intermediate values; worth investigating for your workload.

50K+: uniformity breaks down. The stddev (11,695) exceeds the average (9,999) — cell sizes vary wildly. The finest resolutions disappear. Fine for "split the world into ~7K chunks," too uneven for balanced work queues.

100K: barely adaptive. Only 6 resolution levels remain, Gini hits 0.63. The bottom decile averages 10 places/cell, the top decile averages 74,575 — a 7,500x spread.

Watch for single-place cells. Every threshold produces cells with exactly 1 place — pure scheduling overhead. At t=5K there are 851 of them (1.3% of cells); at t=100K only 18. Not worth optimizing away, but good to know when sizing your queue.

The practical rule: set the threshold to the largest batch your worker can process without memory pressure.

Detailed breakdown for threshold = 10,000:

| res | cells | places | avg/cell | min | max |

|---|---|---|---|---|---|

| 1 | 640 | 572,928 | 895 | 2 | 9,953 |

| 2 | 794 | 1,503,620 | 1,894 | 1 | 10,000 |

| 3 | 2,893 | 6,640,106 | 2,295 | 1 | 10,000 |

| 4 | 7,751 | 18,321,519 | 2,364 | 1 | 9,995 |

| 5 | 8,634 | 18,201,626 | 2,108 | 1 | 9,997 |

| 6 | 7,486 | 17,339,166 | 2,316 | 1 | 9,986 |

| 7 | 3,878 | 8,889,703 | 2,292 | 1 | 9,896 |

| 8 | 657 | 1,303,188 | 1,984 | 1 | 9,956 |

| 9 | 7 | 11,365 | 1,624 | 357 | 2,217 |

32,740 cells covering 72,783,221 places, each place counted exactly once. Every max stays at or below the threshold. Full benchmark results including decile distributions and validation queries are in the companion repo.

Querying the view

-- Basic

SELECT cell, res, place_count FROM places_h3_t10000;

-- With geometry for rendering

SELECT cell, res, place_count,

ST_SetSRID(h3_cell_to_boundary(cell)::geometry, 4326) AS geom

FROM places_h3_t10000;

-- Filter to a bounding box (Los Angeles)

SELECT cell, res, place_count,

ST_SetSRID(h3_cell_to_boundary(cell)::geometry, 4326) AS geom

FROM places_h3_t10000

WHERE ST_Intersects(

ST_SetSRID(h3_cell_to_boundary(cell)::geometry, 4326),

ST_MakeEnvelope(-118.7, 33.7, -117.7, 34.4, 4326)

);

This is a good use case for a materialized view — the expensive part is building the multi-resolution counts; querying becomes a cheap SELECT with optional bbox filtering. Refresh on your data update schedule.

Conclusion

- Compute once at res 10, derive parents —

h3_cell_to_parentis a bitwise truncation, practically free. One expensivelatlng_to_cellcall per point instead of ten. - The 5K–10K threshold range is the sweet spot for backend work queues. Below 5K you're paying scheduling overhead for marginal uniformity gains. Above 50K the distribution becomes too uneven for balanced workloads.

- Use the index, not the polygon. H3's geographic containment is approximate —

cellToBoundarypolygons don't perfectly contain all index-assigned points. Always assign places vialatLngToCell, never viaST_Intersectson the boundary. - Validate your frontier. The overlap check (0 pairs across all thresholds) and total count match confirm the view is correct. Run them after any schema change.

Tested with PostgreSQL 17, PostGIS 3.5, and h3-pg 4.x on the Overture Maps dataset (72.8M places). Full benchmark code and queries: github.com/nikmarch/overturemaps-pg