Part 1 proved DuckDB-WASM can query Overture Maps GeoParquet directly from S3 via HTTP range requests — no backend, no ETL. Part 2 dealt with the aftermath: edge-cached file discovery, multi-theme loading, streaming progress, and UX that makes "slow by nature" data feel predictable.

Part 3: the browser becomes a query tool.

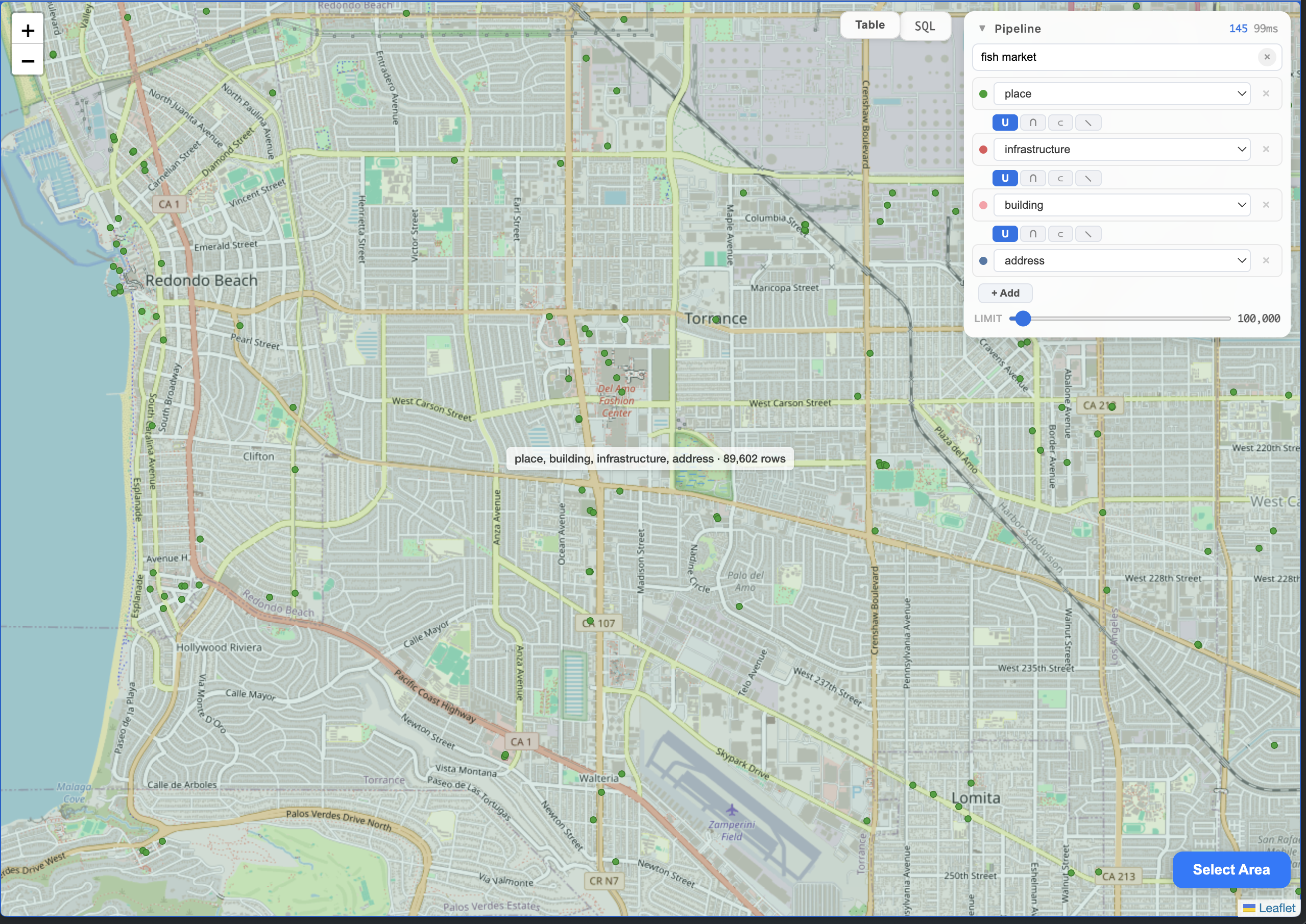

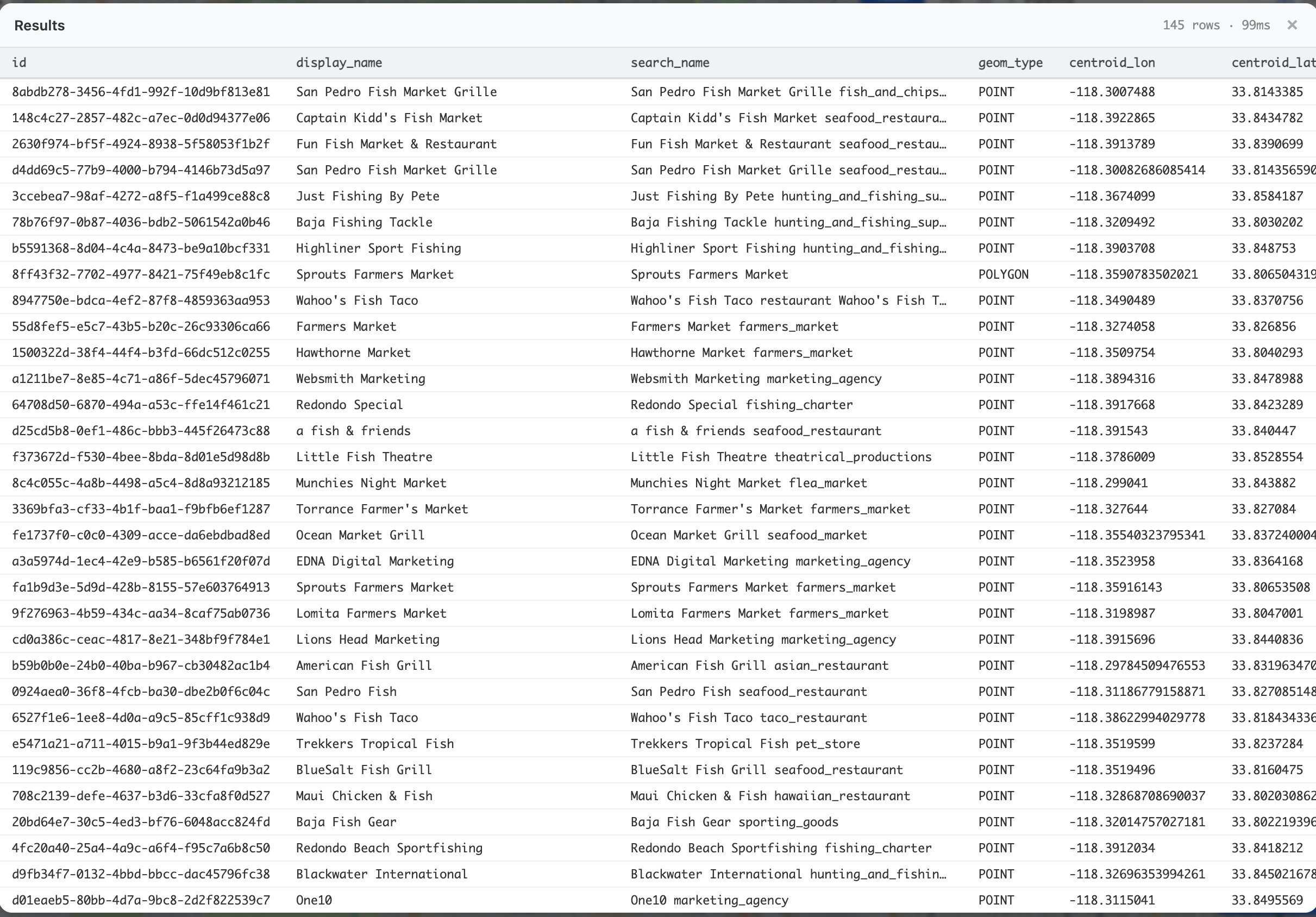

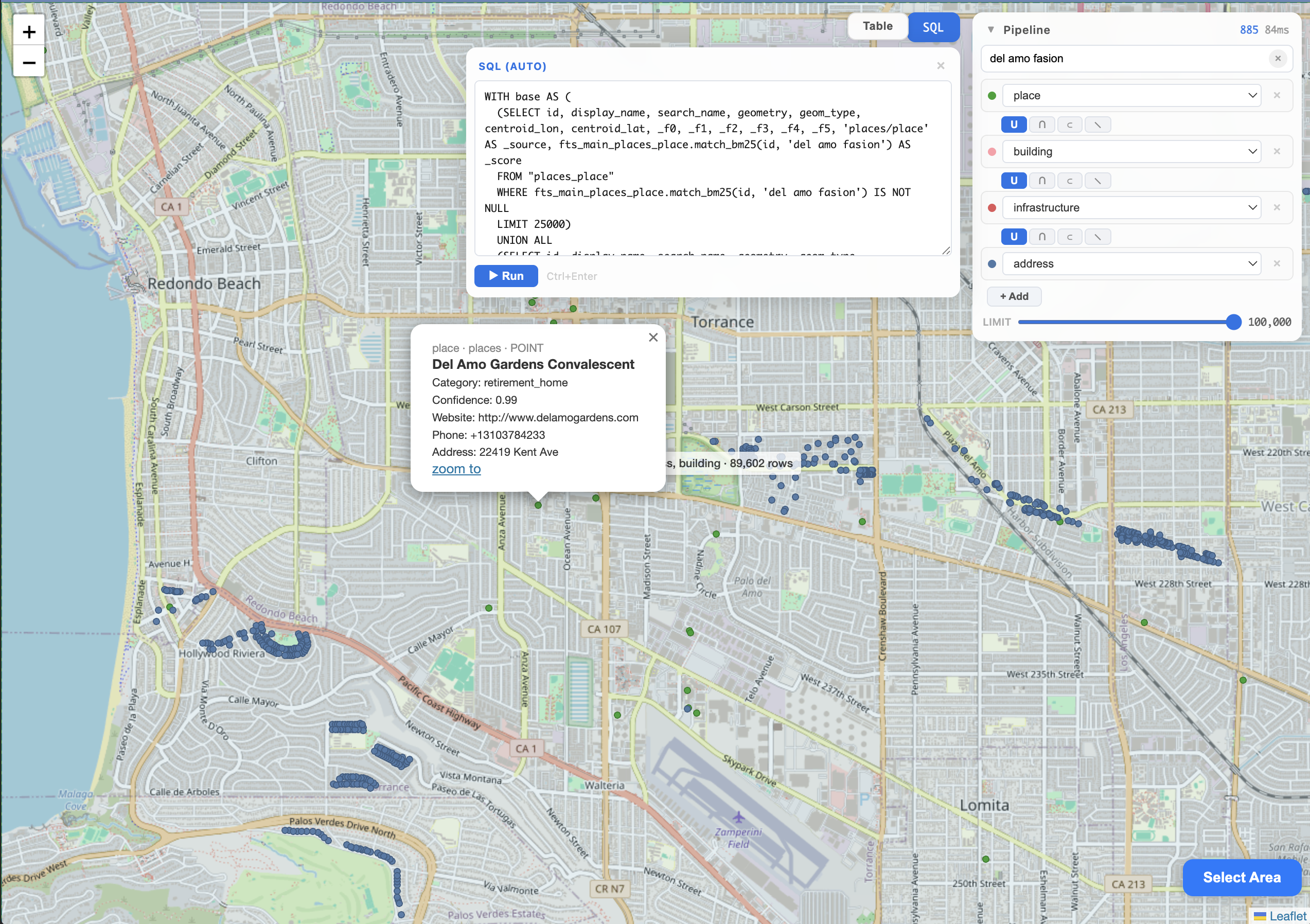

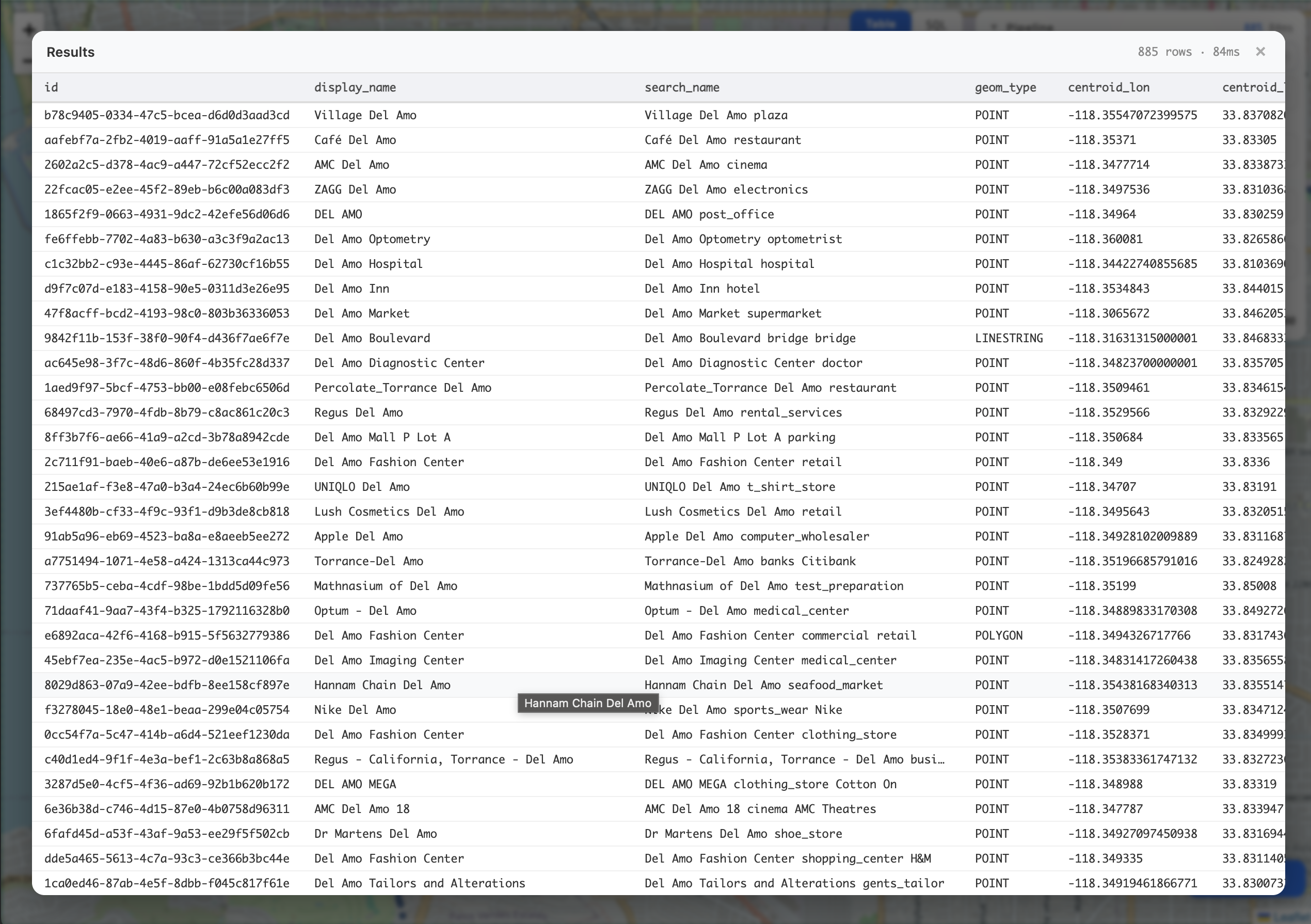

Search "fish market" across four themes. See 145 ranked results on a map. Switch to table view, sort by BM25 score. Edit the generated SQL. Share the whole thing as a URL. Close the tab, come back later — your session persists in DuckDB's IndexedDB storage.

POC disclaimer: This is a proof of concept. The SQL generation is string concatenation. The spatial joins are naive cross-products guarded by centroid-distance pre-filters. Some of the escaping would make a security reviewer nervous. The point is exploring what's possible when the query engine lives in the browser — not shipping production code.

Try it: maps.marchen.co

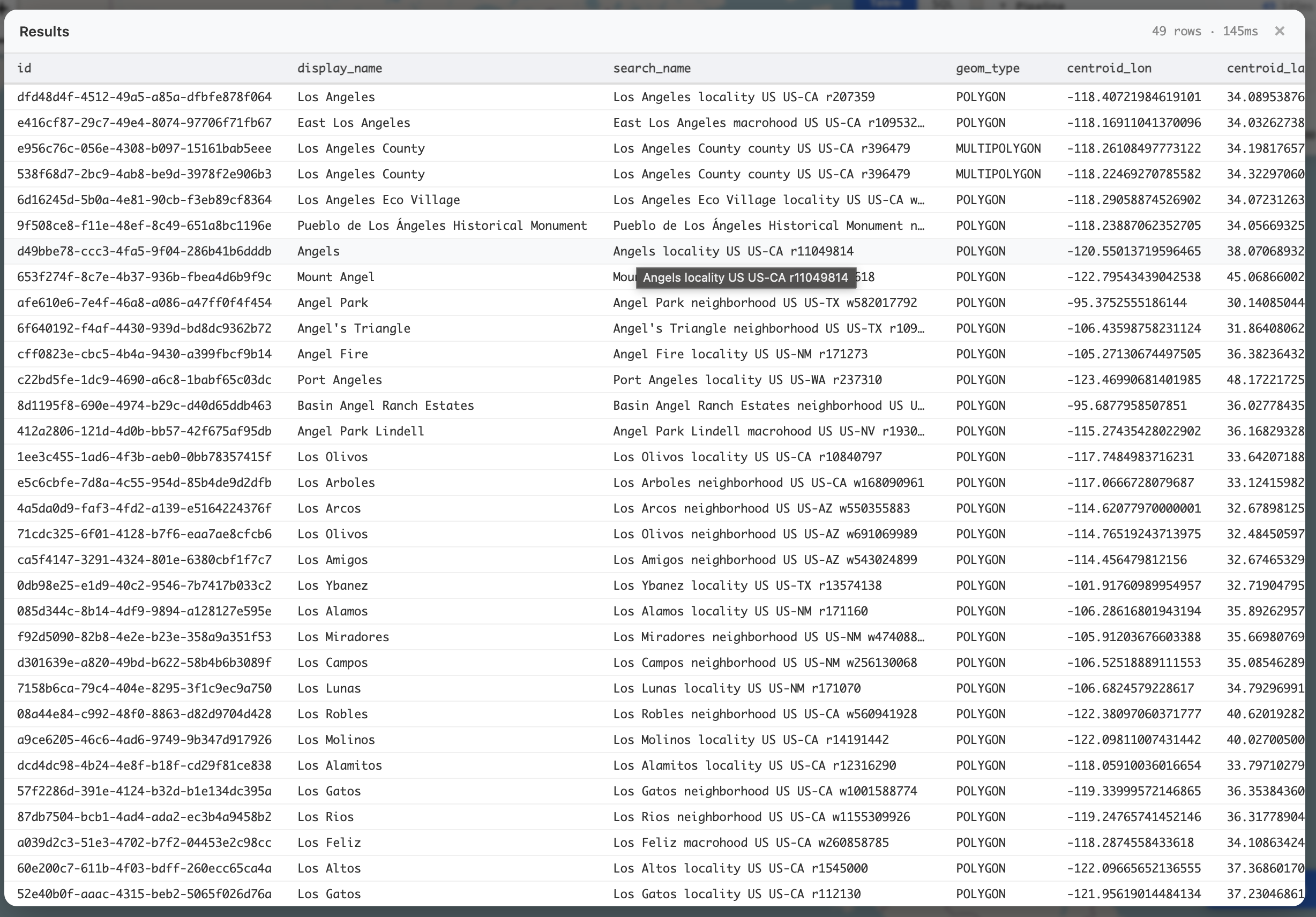

search_name column shows what FTS actually matched against.

match_bm25. Click a result to see category, confidence, website, phone — all from the Overture schema.

display_name is clean for UI. search_name includes category, brand, subtype — so "retirement_home" matches FTS even if the place name doesn't say it.Draw-to-Select: The Biggest UX Shift

Parts 1 and 2 re-queried data whenever the map viewport changed. Clever but infuriating:

- Pan slightly → query fires → spinner → flow broken

- Zoom in → data reloads with new bbox → different results

- No way to "hold" a dataset while exploring it

The fix: separate "what data I have" from "what I'm looking at."

- Click "Select Area"

- Draw a rectangle on the map

- Pick which themes to load

- Data loads for that rectangle — and stays loaded

- Pan and zoom freely. The pipeline queries local tables. No re-downloads.

The pipeline runner doesn't subscribe to map moveend events. It only reruns when pipeline state changes (search text, node config, SQL override). Moving the map is free.

This one change rewired the entire interaction model. You're not fighting the loading system anymore — you're choosing what to investigate.

A bigger area means a longer load. 61.8 seconds for Hermosa Beach to Lomita. You pay this cost once, then explore freely. The drawn rectangle shows exactly what you're getting.

The Reactive Filter Pipeline

Once data is loaded, you need to actually query it. That's the pipeline.

Each theme that finishes loading automatically gets a pipeline node — the first becomes a source, subsequent ones become combine with union. No manual setup; draw an area and pick themes, the pipeline appears.

Each node is a simple object:

{

id: 'p1',

type: 'source', // or 'combine'

table: 'places_place',

key: 'places/place',

op: 'union', // or 'intersect', 'within', 'exclude'

distance: 250, // meters, for 'within'/'exclude'

}

Toggle a theme, change the spatial operation, adjust the proximity distance — every change triggers compilePipeline() → new SQL → runPipeline(). A 300ms debounce prevents query-per-keystroke when typing search terms.

Every row in the compiled query carries a _source column ('places/place', 'buildings/building', etc.) — this enables per-theme coloring on the map and the grouped rendering in the pipeline runner.

Two Compilation Modes

Union-only (no spatial ops) — balanced sampling with per-source limits:

WITH base AS (

(SELECT id, display_name, search_name, geometry, ...,

fts_main_places_place.match_bm25(id, 'del amo') AS _score,

'places/place' AS _source

FROM "places_place"

WHERE fts_main_places_place.match_bm25(id, 'del amo') IS NOT NULL

LIMIT 500)

UNION ALL

(SELECT ...,

'addresses/address' AS _source

FROM "addresses_address"

WHERE fts_main_addresses_address.match_bm25(id, 'del amo') IS NOT NULL

LIMIT 500)

)

SELECT id, display_name, search_name,

ST_AsGeoJSON(geometry) AS geojson, ..., _score

FROM base

WHERE centroid_lon BETWEEN -118.43 AND -118.31

AND centroid_lat BETWEEN 33.79 AND 33.87

ORDER BY _score DESC

LIMIT 1000

Per-source limits (LIMIT 500 each) prevent one theme from dominating results. GeoJSON conversion happens in the outer SELECT, not the CTE — geometry stays native WKB until render time.

Spatial pipeline (intersect/within/exclude) — per-source limits drop, and EXISTS subqueries with centroid pre-filters handle the spatial logic:

matched_0 AS (

SELECT base.id FROM base

WHERE EXISTS (

SELECT 1 FROM "buildings_building" b

WHERE base.id != b.id

AND ABS(base.centroid_lon - b.centroid_lon) < 0.2

AND ABS(base.centroid_lat - b.centroid_lat) < 0.2

AND ST_Intersects(base.geometry, b.geometry)

)

UNION

SELECT b.id FROM "buildings_building" b

WHERE EXISTS (

SELECT 1 FROM base

WHERE base.id != b.id

AND ABS(base.centroid_lon - b.centroid_lon) < 0.2

AND ABS(base.centroid_lat - b.centroid_lat) < 0.2

AND ST_Intersects(base.geometry, b.geometry)

)

)

Both sides of the relationship get collected — if a place intersects a building, both appear in results. The ABS(centroid_lon - ...) < 0.2 guard is a cheap bounding-box pre-filter (~20km at mid-latitudes) that lets DuckDB skip the expensive ST_Intersects call for distant pairs.

(Would a spatial index be better? Yes. But DuckDB-WASM's R-tree support is spotty, and on a few thousand in-memory rows the pre-filter is fast enough. POC tradeoffs.)

The compile step is pure — state in, SQL string out. No side effects, fully testable:

test('FTS search generates match_bm25', () => {

const sql = compilePipeline(nodes, {

search: 'del amo',

ftsTables: new Set(['places_place']),

bbox: { xmin: -118.4, xmax: -118.3, ymin: 33.8, ymax: 33.9 },

limit: 1000,

});

expect(sql).toContain('match_bm25');

expect(sql).not.toContain('ILIKE');

});

Full-Text Search with DuckDB FTS

DuckDB's FTS extension brings real full-text search to WASM. Not ILIKE '%term%' substring matching — actual BM25 ranking, the algorithm behind search engines.

Building FTS Indexes In-Browser

When a theme loads into a DuckDB table, we build an FTS index:

export async function ensureFtsIndex(conn, tableName) {

try {

await conn.query(

`PRAGMA create_fts_index('${tableName}', 'id', 'search_name', overwrite=1)`

);

FTS_PRESENT_CACHE.set(tableName, true);

return true;

} catch {

FTS_PRESENT_CACHE.set(tableName, false);

return false;

}

}

The pragma creates a helper table (fts_main_<tablename>) that tokenizes the search_name column. FTS_PRESENT_CACHE (a Map) avoids re-checking information_schema on subsequent queries. The whole thing lives in the browser's WASM heap.

If FTS isn't available (older WASM build, bad schema), it fails silently and the pipeline falls back to ILIKE. If a match_bm25 call fails at runtime (stale index, extension not loaded), the pipeline runner catches the error, clears the FTS cache, and recompiles with ILIKE — graceful degradation, not a crash.

Enriching search_name

Early on, FTS only indexed the place's primary name. Searching "seafood" wouldn't find a place categorized as seafood_restaurant if its name was "Joe's Kitchen."

The fix: compose a search_name at load time from the theme's text-like fields. For places/place, this expands to:

SELECT

COALESCE(CAST(names.primary AS VARCHAR), '') AS display_name,

CONCAT_WS(' ',

COALESCE(CAST(names.primary AS VARCHAR), ''),

COALESCE(CAST(categories.primary AS VARCHAR), ''),

COALESCE(CAST(brand.names.primary AS VARCHAR), '')

) AS search_name,

...

FROM read_parquet(...)

display_name stays clean for the UI. search_name gets enriched with whatever text fields the theme defines — category and brand for places, street and postcode for addresses, subtype and class for buildings. The composition is dynamic: buildCacheSelect() iterates THEME_FIELDS[key] and includes fields that pass isSearchableField(), which excludes numeric labels (Height, Floors, Confidence, Speed limit) and noisy ones (Address, Website, Phone).

BM25 Ranking and Relevance Scores

The pipeline generates different SQL per table:

-- With FTS index: scored and ranked

SELECT ..., fts_main_places_place.match_bm25(id, 'fish market') AS _score

FROM "places_place"

WHERE fts_main_places_place.match_bm25(id, 'fish market') IS NOT NULL

-- Without FTS: unranked substring match

WHERE search_name ILIKE '%fish market%'

ORDER BY length(search_name) ASC

BM25 considers term frequency, document length, and corpus statistics. "San Pedro Fish Market Grille" scores higher than "Blackwater International" (which matched because its search_name includes hunting_and_fishing).

The _score column flows through to the table view — results arrive pre-sorted by relevance. Map and table share the same pipelineRows from Zustand — no duplicate query.

The SQL Panel and Table View

The SQL panel shows the compiled query in real-time. It's labeled "SQL (auto)" when pipeline-generated, "SQL (edited)" when you've modified it.

This is the escape hatch. The pipeline covers common patterns (union, intersect, proximity, search). But if you want a custom GROUP BY or a hand-tuned ST_Distance threshold — edit the SQL directly and hit Ctrl+Enter. The sqlOverride in Zustand takes precedence over the compiled query until you change a pipeline node (which clears it).

Map and table views render from the same pipelineRows in Zustand — switch freely, no re-execution.

Session Persistence: DuckDB as Its Own State Store

Here's a trick that fell out naturally: use DuckDB to persist its own session state.

DuckDB-WASM has persistent storage backed by IndexedDB. So instead of a separate persistence layer:

CREATE TABLE IF NOT EXISTS _session (key VARCHAR PRIMARY KEY, val VARCHAR);

INSERT OR REPLACE INTO _session (key, val)

VALUES ('pipeline', '[{"id":"p1","type":"source","table":"places_place",...}]');

Six keys get synced: pipeline, pipelineSearch, pipelineLimit, pipelineBbox, sqlOverride, loadedTables. A debounced Zustand subscriber writes changes to _session every 500ms. On init, restoreSession() reads all six rows and hydrates the store.

The _session and _load_history tables are preserved when you clear data for a new area — dropAllTables() checks a SYSTEM_TABLES Set and skips them.

URL ?sv= params take priority over session restore — shared links win. Otherwise, you pick up where you left off.

Shareable URLs

The ?sv= parameter in the URL hash encodes pipeline state — theme keys, drawn bbox, search text, limit — compressed using the browser's native CompressionStream('deflate-raw') and base64url-encoded. A typical payload: ~400 characters. Short enough for Slack.

An early version also encoded the compiled SQL. That broke immediately: shared links included match_bm25 calls, but the recipient's browser hadn't built FTS indexes yet — instant Catalog Error. The fix: share the ingredients, not the cooked query. The URL encodes what to load and what to search for; the recipient's pipeline recompiles fresh SQL after tables and FTS indexes are ready.

The restore flow handles the async dance: decode → draw bbox → load themes → wait for DuckDB tables to exist → build FTS indexes → compile pipeline → run query. Parquet downloads are slow; everything has to chain correctly.

The URL auto-syncs: a Zustand subscriber debounces at 500ms and calls encodeStateToUrl() whenever pipeline state changes.

Try this query (loads themes, draws the area, runs the search):

- "del amo" in South Bay LA — places + buildings, search "del amo" across the South Bay

- "fish market" in South Bay LA — places, buildings, infrastructure, addresses across four themes

Open it, wait for Parquet files to download (~30s), and the search runs automatically.

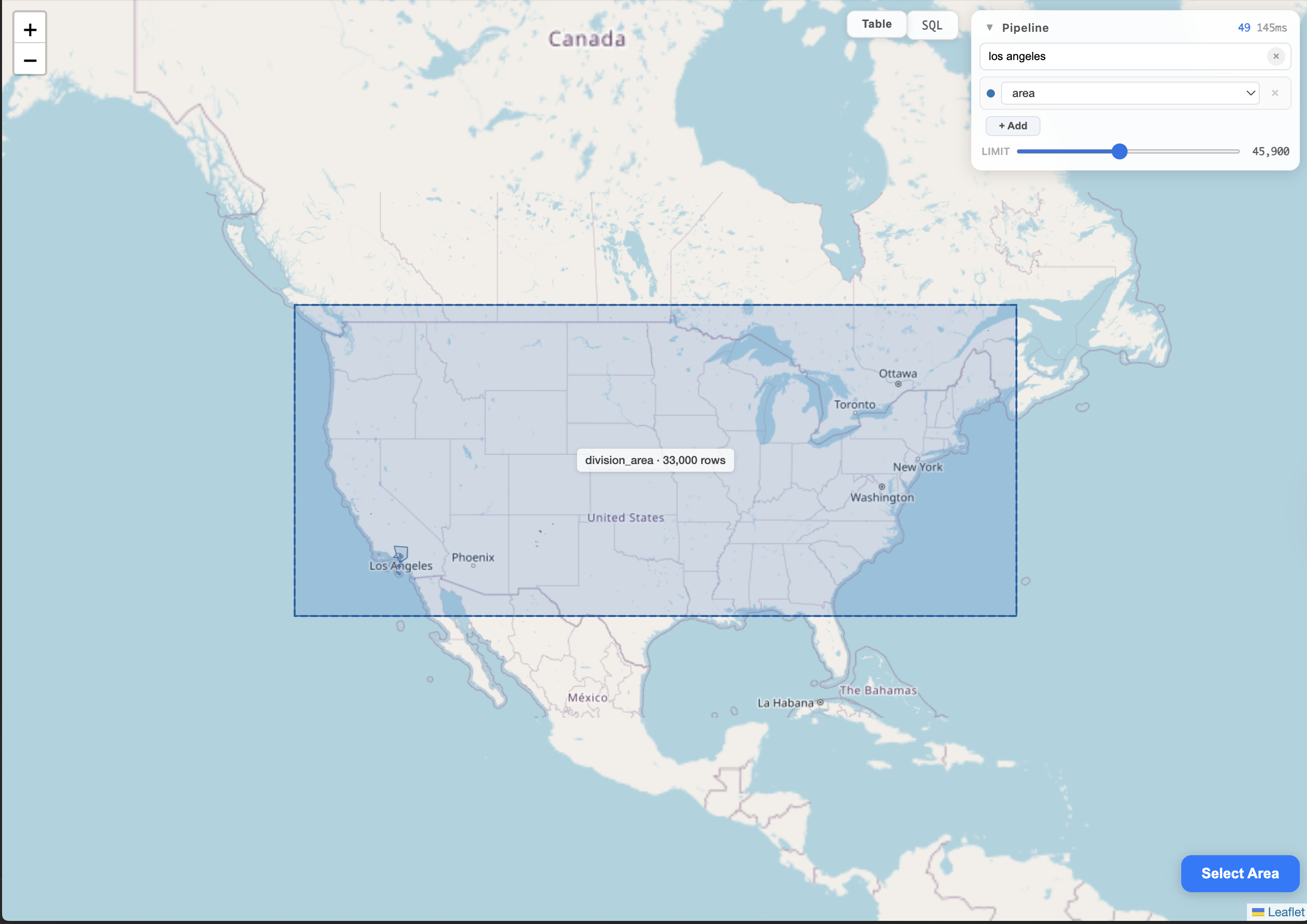

Pushing the Scale: 25K Division Areas Across the US

The same pattern works at continental scale. Draw a rectangle around the entire US, load division_area (administrative boundaries) — 33,000 polygons in about a minute. Then type "los angeles": FTS returns 49 matching areas in 345ms, ranked by relevance. Los Angeles city, Los Angeles County, neighborhoods, localities — each with geometry type, centroid, and canonical IDs.

Building polygons cover everything at this density. The renderer handles it with zOrderBySize() — sorts layers by bounding-box area, calls bringToBack() largest-first so the smallest geometry ends up on top and stays clickable. Division polygons also get dynamic fill opacity scaled inversely by area: California gets near-transparent, a city boundary gets more visible.

What Changed

Same stack (Cloudflare Pages + Workers, DuckDB-WASM, Leaflet). Different interaction model:

| Part 1–2 | Part 3 | |

|---|---|---|

| Area selection | Viewport auto-requery | Draw rectangle explicitly |

| Search | None | FTS with BM25 + enriched search_name |

| SQL | Hidden | Visible, editable, runnable |

| Session | Lost on refresh | Persisted in DuckDB _session table |

| Sharing | URL encodes map center only | Full state in URL hash |

What's Next

This iteration turned the viewer into something closer to a browser-native GIS workbench. The pattern keeps holding: push compute to the browser, use the edge for coordination, keep the backend at zero.

The app is still slow for initial loads. That's fine — it's a POC. The point isn't to compete with vector tiles for visualization speed. It's to show that a browser tab can be a legitimate query tool: search, filter, combine, inspect, persist, share — against a public data lake, with zero backend.

Part 1: SQL in the Browser: Querying 2.3 Billion Records with Zero Backend

Part 2: DuckDB-WASM & Edge Metadata: Multi-Theme Browsing, Streaming Progress & Honest UX

Try the map: https://maps.marchen.co