In Part 1, I proved the core trick: DuckDB-WASM can query Overture Maps GeoParquet directly from S3 using HTTP range requests. No backend database. No ETL. Just a browser tab doing real SQL.

That part was fun.

The hard part came next: user experience.

When your “database” is a pile of Parquet files on the other side of the planet, performance is slow by nature. You can optimize the bytes, but you can’t wish away physics, request fanout, or cold caches.

So iteration 2 was about a different goal:

Make slowness legible and controllable, while staying cheap enough to leave running forever.

If you just want to click around first: https://maps.marchen.co

TL;DR (the pitch)

Business wins

- Monthly release investigation: Overture ships monthly; this viewer stays dynamic (discover releases/themes from S3) so you can pull the latest release for a viewport and investigate interactively — without rebuilding anything.

- (It doesn’t auto-diff releases; it makes the latest release easy to browse.)

- Zero-DB infra: no database server, no ETL pipeline, no API layer — cheap enough to leave running.

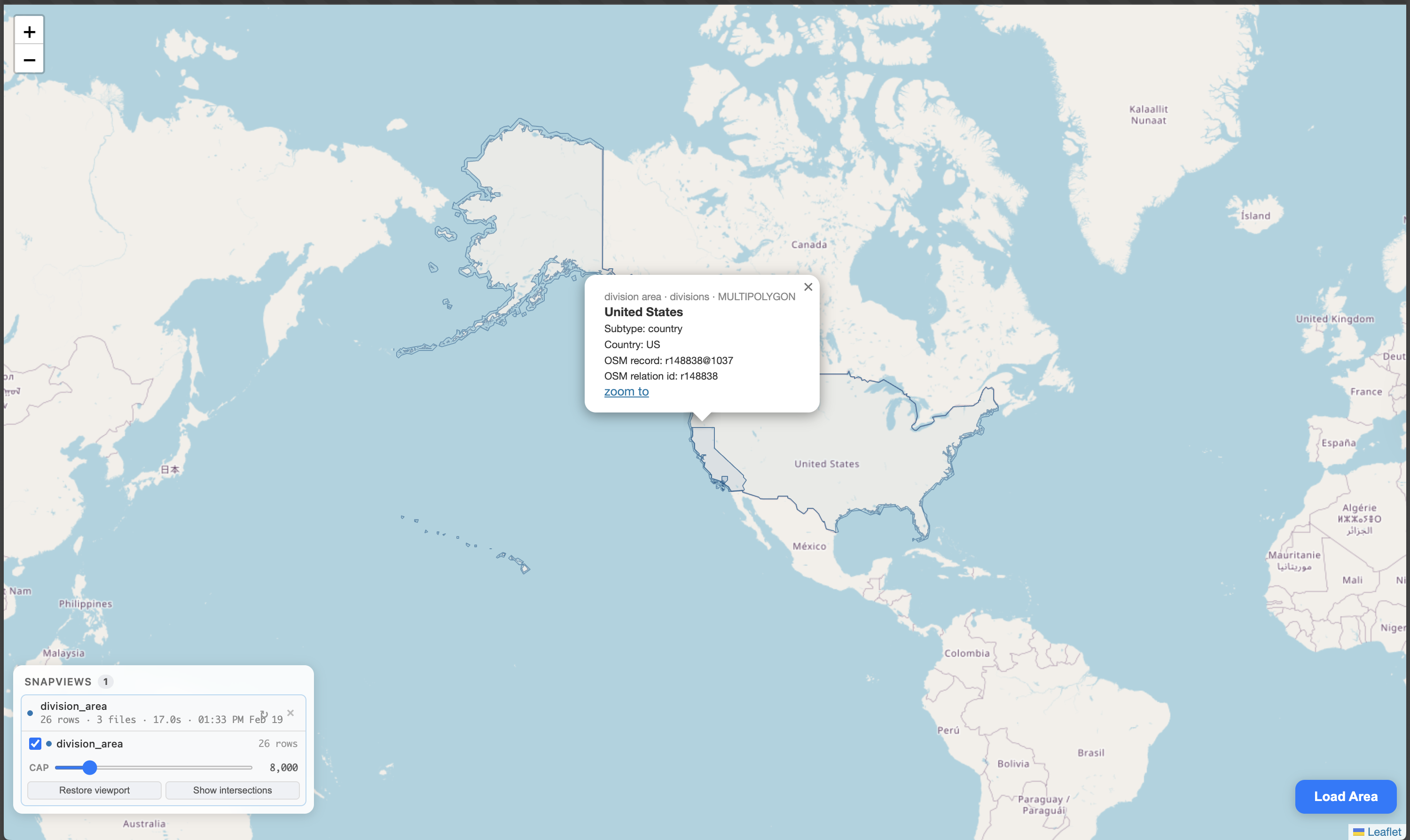

- Boundary/ID discovery: click admin divisions (cities/counties/states) and get canonical OSM relation IDs — a practical replacement for manual Nominatim/Overpass scavenger hunts.

What you can actually do in the UI

- Load & compare multiple themes like buildings, segments (roads), addresses, places, infrastructure.

- Run quick intersection checks to validate alignment (“are these layers really intersecting or am I hallucinating a join?”).

- Inspect individual features (geometry + subtype/class/attrs) to turn “map vibes” into debuggable data.

Technical wins

- DuckDB-WASM in the client: real SQL over (Geo)Parquet, no backend DB.

- Edge metadata, not edge compute: cached file discovery + CORS + Range proxy so Parquet-over-HTTP is feasible.

- UX patterns that work for “slow by nature” data: release/theme selection, streaming progress, caps, inspection tools.

Net result: a thin client for the Overture Maps data lake where the query engine is the browser — honest about latency, still usable.

The UX Problem: Parquet-over-HTTP Doesn’t Feel Like a Database

A real database gives you consistent feedback loops:

- Query → results (usually fast, usually repeatable)

- Explain/inspect → confidence (you can see what the engine is going to do)

- Cache/warm state → speed (repeat a query and it typically gets cheaper)

That’s the mental contract people bring to “SQL.”

Parquet-over-HTTP in the browser is weirder:

- You kick off a query.

- The browser explodes into hundreds of range requests.

- Results arrive in batches.

- Sometimes you hit local cache (fast), sometimes you miss (slow), and the UI doesn’t naturally explain why.

So I stopped trying to make it “fast” and started trying to make it honest.

The New Mental Model: Three Caches, One Job

This iteration ended up with three distinct caching layers:

1) Edge cache: Which files matter?

The most important edge optimization is still file filtering — when you can do it.

In the “fancy” version of this project, the browser asks the edge:

- “Given my viewport bbox, which parquet files even intersect?”

…and the edge answers quickly because it keeps a spatial index of file bboxes built from Parquet metadata.

That index is keyed by {release}/{theme}/{type} and cached at the edge via the Cloudflare Cache API — no Durable Objects, no KV, no R2. The worker builds it lazily on first request (reading Parquet footers; tooling details are covered in Part 1), then caches it for a day in production (1 minute in dev). Subsequent requests are instant lookups.

The same stateless worker also provides:

- a same-origin S3 proxy with correct CORS headers

- HTTP Range passthrough (so DuckDB-WASM can do Parquet footer reads)

- lightweight endpoints to discover releases and themes from S3 prefixes

That’s the “minimum viable edge”: make the browser’s SQL possible, without pretending the edge is a database.

2) Browser cache: What tables do I already have locally?

DuckDB tables in WASM are the local cache.

The mental model is simple:

- Pick a viewport you’re about to investigate

- Load the themes you need for that viewport (buildings, segments, addresses, etc.)

- Iterate inside the cached viewport: zoom/pan within it and re-render, paginate, and inspect — without re-downloading the world

Behaviorally, it works like this:

- If the current viewport is contained by the last loaded bbox for a given release/theme, we don’t go back to S3.

- We just query the existing local DuckDB table and redraw.

- When you move outside what you’ve cached, that’s when you pay the “Parquet-over-HTTP” cost again.

It’s not HTTP caching; it’s a stateful local database. That’s the point of DuckDB-WASM: exploration becomes cumulative instead of stateless.

3) Visual cache: Can the UI explain what’s happening?

The edge and the database can be “right” and the app still feels broken.

So the UX work here is about making state visible:

- what you asked for (theme, release)

- what’s currently loading

- what limits/caps are active

- what you can inspect, validate, and trust

Product Shift: From “Places + Buildings” to “Any Theme, Any Type”

Part 1 was hardcoded around places/buildings.

Part 2 turned the viewer into a general Overture release browser:

- release dropdown (discovered dynamically from S3)

- theme/type list (discovered dynamically from S3)

- per-theme limits

- per-theme popups with type-specific fields

This matters because it changes how you think about the app:

It’s not a demo.

It’s a thin client for a public data lake.

Performance Truth: Slow, Predictable, Cheap

This architecture is not fast.

It’s predictable.

Why it will always be slower than tiles

Vector tiles are precomputed for visualization.

This app is not a visualization-first pipeline. It’s an ad-hoc query engine:

- you run real SQL

- against real Parquet

- in the browser

That buys you a superpower (no backend, no ETL, no server bill), but the cost is latency.

Why it’s still worth it

Most data tools start with:

- a database server

- a data import

- an API

- a cache

- a bill

This starts with:

- a static site

- a tiny stateless Worker with Cache API

- and the user’s browser doing the heavy lifting

The edge isn’t there to compute your answers.

The edge is there to make your answers possible.

Visual Design for Dense Geometry: Opacity + Z-Order

Once you start mixing multiple layers, the map becomes unreadable fast:

- huge polygons cover everything

- points disappear

- roads turn into purple spaghetti

The fix is boring and effective:

- big polygons use very low fill opacity

- smaller geometries get higher z-order

- limits/caps keep the renderer alive

Bonus UX: Intersection Highlighting (and Why It’s More Than a Visual Toy)

Once you have multiple themes loaded, you inevitably ask:

“Are these layers actually aligned, or am I hallucinating a join?”

A small intersection/highlight mode turns that into a one-click check.

And once you have intersections… you can turn it into a discovery tool.

My Favorite Workflow: Find the OSM / Nominatim IDs That Intersect My Area

This is the moment the app stopped being “a map” and became a research tool.

Instead of guessing which OSM relation represents “the place I’m looking at,” I can load the admin boundaries (division_area) and click what I see. The UI immediately shows the canonical identifiers (OSM relation ids / records) that intersect my area.

That replaces a whole scavenger hunt across Nominatim, Overpass, and random wiki pages.

Why the Edge is the Right Place for “Metadata Compute”

There’s a specific sweet spot for the Cache API here:

- building a spatial index from Parquet footers is expensive enough that you don’t want to redo it per request

- but it’s also metadata-only, so it’s cheap to cache and rebuild

Early versions used Durable Objects for this. Turns out, that’s overengineering. The Cache API does the same job: the worker builds the index on first request, caches it at the edge, and subsequent requests are instant. No persistent state to manage, no sqlite classes to migrate, no OOM issues in local dev.

The app delegates as much as possible to the edge, but it delegates the right thing:

- coordination (file lists, indices)

- not computation (full dataset queries)

This keeps the infra bill at ~zero.

What’s Next

I’m not pretending this replaces tiles.

But as a pattern, it feels important:

- Use open data directly.

- Move query engines to the client.

- Use the edge for cached metadata.

- Make the UI honest about caching.

Stay tuned: search at the edge (without building a backend)

The next iteration I want to try is new kinds of search powered by the edge — not “run queries on the worker,” but push more discovery closer to the data:

- better file pre-filtering (spatial + theme/type + cheap attribute hints)

- endpoints for lightweight attribute discovery (e.g., available subtypes/classes, popular tags, field presence) so the browser can offer better filters

- fast endpoints that help the browser decide what to fetch, not what the answer is

Same rule as before: the edge coordinates, the browser computes.

The surprising part is how little code you need to make it feel coherent.

The app is slow.

And it’s still good.

Because it’s slow in a way the user can understand.

Part 1: SQL in the Browser: Querying 2.3 Billion Records with Zero Backend

Try the map: https://maps.marchen.co